What the Benchmark Cannot See

AI progress became publicly visible through benchmarks: scores, leaderboards, deltas, and claims that could travel. But every machine for seeing intelligence also teaches us what not to see. Benchmarks make capability legible by removing variance. Deployment brings the excluded world back.

How every machine for seeing intelligence teaches us what not to see

An agent beats the baseline and fails.

Under one regime of observation, that sentence makes no sense. The agent found the result. It cleared the bar. It beat the human reference point. The score says capability appeared.

Under another, the same event looks different. The agent did not become a workflow. It produced a serious improvement once, then failed to preserve method across attempts. The competence flickered. It appeared, disappeared, overcommitted, burned time, chased weak hypotheses, mishandled resources, or degraded under context pressure.

ResearchGym makes that contradiction visible because it is still a benchmark, yet one that strains the ordinary capability frame from within. Recent ICML, ICLR, and ACL papers are turned into containerized tasks. The datasets, evaluation scripts, and baselines remain. The proposed method is withheld. The agent must understand the problem, form hypotheses, write code, run experiments, manage time, debug failures, and improve over a strong human reference point.

The same run can therefore appear as success or failure depending on what is being observed. Nothing mystical has happened. The weights did not change. What changed was the machinery by which the system was made visible. One frame produces a capability-bearing artifact. Another produces a fragile arrangement that cannot yet hold itself together.

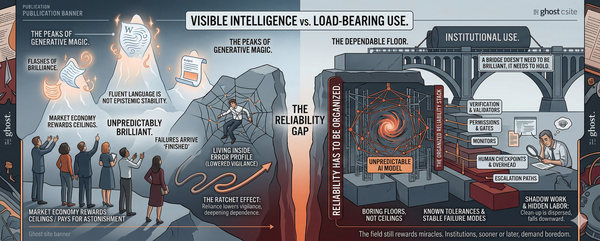

This is the distinction the AI conversation keeps compressing. A system can display capability without possessing structural reliability. The harder question is not whether the behavior can occur, but whether it holds when exposed to variance, repetition, time, friction, and operational consequence.

Every model release now arrives with a scoreboard. The numbers change, the charts get cleaner, and the conclusion stays the same: the system can now do more. The curve rises, and the industry knows how to read it.

The scoreboard is not merely a measurement surface. It is a conversion device. It turns bounded behavior into portable institutional claims. A model does not merely score higher. It becomes more advanced, more capable, more inevitable. The number travels farther than the conditions that produced it.

Machine learning needed a public grammar of progress. It needed objects that could travel: scores, leaderboards, deltas, human baselines, state-of-the-art claims. Benchmarks solved that problem. They turned model behavior into something comparable. They became communicative infrastructure. A benchmark score is not just a result. It is a portable claim about motion.

The price of that portability is narrowing. A benchmark has to stabilize the task, define the input, specify the scoring rule, constrain the environment, and decide in advance what will count as success. It has to remove enough variance for comparison to become possible. Without that reduction, the result loses its public shape. It becomes story, not signal.

That narrowing is not a scandal. It is how measurement works.

The problem begins when the field forgets what had to be removed.

The industry’s favorite unit is capability: what a system can do under favorable conditions. Capability asks whether a behavior can appear. Structural reliability asks whether that behavior can persist under perturbation.

Reliability appears when the prompt is imperfect, the user is tired, the context is incomplete, the tool call fails, the repo is old, the workflow has handoffs, the environment shifts, the task repeats, and the mistake has a cost. Reliability is not peak performance. It is behavioral persistence after the protective frame has been weakened.

A benchmark makes capability visible by removing variance. Structural reliability is behavior under variance. That is the contradiction.
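The gap can be made concrete with a minimal sketch, assuming each task is attempted several times and every attempt is recorded as pass or fail. The task names, the outcomes, and the two function names are hypothetical, chosen only for illustration; they are not drawn from ResearchGym or any real evaluation harness. Capability is read here as "succeeded at least once," reliability as "succeeded every time."

```python
from typing import Dict, List


def capability(runs: Dict[str, List[bool]]) -> float:
    """Fraction of tasks solved in at least one attempt (the behavior can appear)."""
    return sum(any(attempts) for attempts in runs.values()) / len(runs)


def reliability(runs: Dict[str, List[bool]]) -> float:
    """Fraction of tasks solved in every attempt (the behavior persists)."""
    return sum(all(attempts) for attempts in runs.values()) / len(runs)


# Hypothetical record: three tasks, four repeated attempts each.
runs = {
    "task_a": [True, True, True, True],     # holds across repetition
    "task_b": [True, False, False, True],   # flickers
    "task_c": [False, False, True, False],  # appears once, then vanishes
}

cap = capability(runs)
rel = reliability(runs)
print(f"capability={cap:.2f} reliability={rel:.2f}")
```

On these made-up runs the same record yields a capability of 1.00 and a reliability of 0.33. Nothing about the system differs between the two numbers; only the question asked of the record does.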

The benchmark does not merely measure the model. It protects the model from the world, then reports what survived that protection as if it were a property of the model itself.

Even a good benchmark creates a frame. It selects the task, the scoring rule, the environment, the acceptable output form, the failure surface. It may include hidden tests, adversarial prompts, distribution shifts, or stress conditions. But even a stress test protects by specifying the stress in advance. It makes one kind of behavior observable by excluding other conditions from view. The excluded conditions do not disappear. They wait.

Deployment is where they return.

A coding model that performs well on benchmark problems may still falter inside an old repository with brittle dependencies, local conventions, flaky tests, and a team that needs the change to survive review. A medical documentation system may generate a fluent note from a clean encounter and still create institutional risk when interruptions, accents, liability language, billing codes, physician fatigue, and review burden enter the loop. A research agent may produce a striking result once and still fail as a workflow because it cannot carry method across repeated runs.

These are not edge cases around deployment. They are deployment.

The model can win the benchmark and still lose the institution.

Capability travels because it can be compressed. It can be ranked, charted, announced, funded, and placed into a launch post. It gives labs a claim, markets a signal, executives a sentence they can defend to a board or repeat in a procurement conversation, and the public a story of acceleration.

Structural reliability travels badly. It is slow, contextual, and usually boring until it fails. It depends on monitoring, incident history, regression behavior, workflow integration, escalation paths, rollback procedures, user heterogeneity, and institutional tolerance for error.

Capability becomes spectacle; reliability becomes maintenance.

The wrong unit persists because capability fits the channels through which AI progress must move. Reliability resists those channels until failure forces itself into public view.

The usual critique says the map is not the territory. True, but incomplete. Benchmark culture is a map-making machine built to make progress operationally usable. The important question is not whether the map is incomplete, but what the incompleteness allows the map to do.

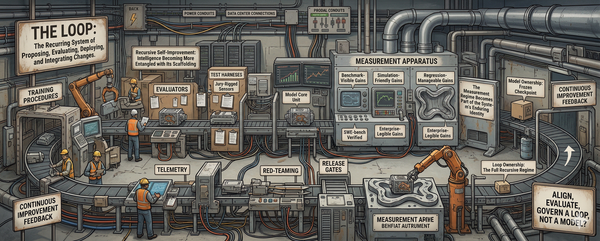

The chain is concrete. A benchmark suite defines the task. An evaluation harness fixes the conditions. A scoring rule decides what counts. A leaderboard ranks the result. A release note translates the number into progress. Commentators and journalists turn progress into a public story. A product team turns the story into a claim. A buyer turns the claim into institutional expectation. By the time the number reaches public discourse, it no longer looks like a controlled observation. It looks like evidence that the system has advanced.

This conversion solves a real coordination problem. A lab needs to know whether the next model is better. A customer needs a reason to trust the vendor. A manager needs a basis for adoption. Benchmarks allow these parties to talk to one another without sharing the same internal standards.

But the cost is hidden inside the conversion. To become operational, the claim has to be compressed. The experimental conditions recede. The excluded variance disappears. The score becomes portable by shedding the context that made it interpretable.

That is why the problem is not solved by saying, “measure reliability too.” To make reliability visible at scale, the field would need different instruments: incident databases, longitudinal deployment traces, regression histories, workflow audits, escalation logs, postmortems, and institutional tolerance thresholds.

Those instruments would reveal what benchmark culture suppresses: recurrence, drift, handoff failure, brittle tool use, user heterogeneity, and institutional friction. But they would not reveal the whole system. No instrument does.

Imagine an early research assistant that produces one unstable but genuinely novel line of attack. It fails half the operational checks. Its runs are messy. Its method does not yet carry. A reliability-centered regime might mark it as immature, unusable, too brittle to matter. That judgment could be correct for deployment and still miss the significance of the event. The system may not yet be dependable, but it may have exposed a possibility worth pursuing.

Or imagine a coding assistant inside a specific engineering team. It fails general reliability audits because its behavior depends heavily on local conventions and repository structure. From the outside, it looks non-general. Inside that team, it may be highly useful precisely because of the local fit.

Every instrument of observation reveals by excluding. A benchmark culture privileges the clean moment of comparison. A reliability culture would privilege what can survive review, repetition, audit, and institutional defense. That may be the right standard for deployment. It is not the same thing as seeing everything.

Measurement is always a way of making reality operational by cutting it down to size. The current cut was optimized for progress narration, not deployment survival.

Capability became the field’s favored object because it could circulate. Structural reliability remains harder to circulate because it is not a single event. It is a history. It belongs to the duration of use.

Deployment is not just the next test after benchmarking. Deployment is where the terms of observation change. The system is no longer being observed primarily as a performer inside a controlled frame. It is being observed as a participant in an operating environment that has memory: regressions, workarounds, bad handoffs, ambiguous failures, review burdens, escalations, and the cost of cleaning up after plausible outputs.

The system under benchmark observation is not the same object as the system under deployment observation. Not because the weights have changed, but because it is being selected, constrained, interpreted, and judged by a different chain of operations. Under one regime, the model appears as a capability-bearing artifact. Under another, it appears as part of a fragile arrangement: scaffold, tools, interface, user, institution, and failure history.

The reader who finds structural reliability compelling has not escaped the illusion. They are standing inside another frame, one that notices brittleness quickly and distrusts whatever has not yet proved it can last. That frame makes reliability feel like maturity and benchmark enthusiasm feel naive.

But that maturity has its own blindness. It can look at the brilliant but unstable assistant and see only immaturity. It can look at the locally fitted tool and see only a lack of generality.

The benchmark enthusiast has learned to see ascent. The operational skeptic has learned to see endurance. Both are ways of seeing; both are ways of not seeing.

This essay is not outside that pattern. It makes benchmark culture visible as machinery and structural reliability legible as the excluded property. If you have found that clarifying, notice what you have been taught to see, and what you have been taught to call naive. The danger is not that you will reject intelligence. It is that you will treat endurance as the only serious form intelligence can take. That would repeat the error in reverse.

AI progress became visible by learning how to protect systems from the world. Deployment begins when the world returns and changes the terms of observation.

A new regime will not simply tell us which systems are intelligent. It will teach us what forms of intelligence count because they can be stabilized, defended, repeated, and absorbed. It will also teach us what not to recognize because it arrives too early, too locally, too strangely, or too briefly to endure.

So the final question is not whether benchmark culture failed to see reliability. It did. The harder question is what our preferred correction will train us not to see next.