Verification Inside the Swarm

What changes when verification stops standing outside the loop? Swarm architectures do not just distribute action. They distribute judgment, making authority more ambient, authorship harder to trace, and the human harder to locate.

Editor’s note: This piece sits alongside earlier essays on refinement loops, infrastructure, legibility, and optimization. The focus here is narrower: what changes when verification stops functioning as an external supervisory stage and begins circulating inside the system itself.

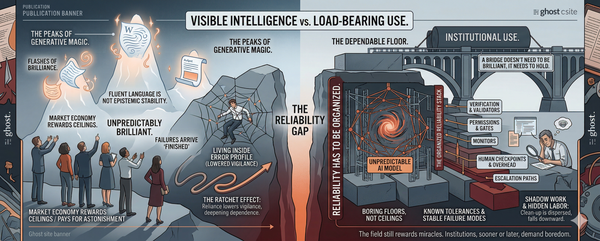

Most people still picture AI in a simple sequence. A system produces something. Then a human, or some supervisory layer, checks it. Generation comes first. Judgment comes after.

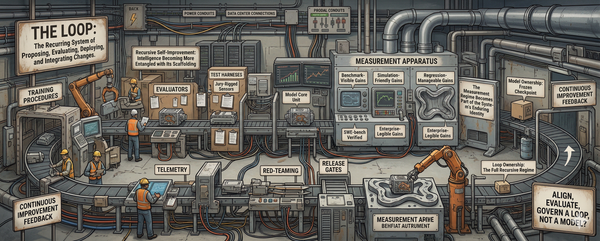

That picture was always cleaner than reality, but in swarm architectures it starts to fail outright. The important shift is not merely that many agents can act at once. It is that evaluation no longer arrives only as an external checkpoint. It starts circulating inside the process itself. Agents do not simply produce. They rank, redirect, filter, reinforce, and constrain one another in flight. Correction is no longer just a stage. It becomes part of the medium.

That changes where judgment lives.

A great deal of the current discussion still treats swarms as a scale story: more agents, more search, more parallelism, more coverage, more iteration. That is true, but it is not yet the deepest thing happening. What makes the swarm architecturally strange is not only that cognition is distributed. It is that judgment is distributed. Verification stops appearing as a separate supervisory layer and begins functioning as a recurrent pattern inside the system itself.

Distributed authority is not the new thing here. Institutions have embedded judgment in rules, procedures, and standards for a long time. What is newer in model-mediated swarm systems is that evaluative criteria are increasingly regenerated inside the same loops that produce results: outputs become inputs, and local judgments become constraints on what continues.

So the real question is no longer whether the human remains “in the loop.” That phrase belongs to an older diagram. The deeper question is what happens once the loop itself becomes the medium of judgment. Where does authority live when correction is no longer cleanly outside the process? What becomes of authorship when evaluative patterns are embedded across many interacting agents rather than visibly concentrated in one overseer?

A recent public case makes the problem easier to see.

Brian Roemmele has been publicly presenting what he calls the “Zero-Human Company,” a setup framed as an AI-run enterprise in which Grok serves as CEO and large numbers of agent simulations are coordinated through MiroFish. Public descriptions pair that framing with claims of hundreds of thousands of simultaneous simulations, while MiroFish is publicly described as a multi-agent prediction engine that constructs a digital world from “seed information” and populates it with many agents carrying memory, personalities, and behavioral logic.

What matters, for present purposes, is not the spectacle of the framing but the pattern of visible involvement. Roemmele is not absent from the system he describes. He remains present as the one who assembles the experiment, assigns roles, reports milestones, and narrates moments of override or redirection. Even in the rhetoric of the “zero-human” company, the human has not disappeared. The human has become harder to place inside the diagram.

This case does not prove a theory of swarm governance. That is not what it is doing here. The public record is mostly framing, claims, and demonstrations presented from the outside. It does not provide a clean internal map of how every evaluative decision is being made. From the public material alone, one can say that the system is being presented as a multi-agent, model-centered, recursively coordinated setup in which a human initiator remains visibly involved in how the experiment is built, narrated, and occasionally redirected. What one cannot honestly say is that every structural property of the system has been independently verified. The case matters because it makes visible a broader architectural tendency that current agent designs make increasingly plausible, not because it exhaustively proves how any one system works internally.

The usual interpretation of swarms is straightforward enough: more agents means more search, more decomposition, more throughput, more surface area brought to bear on a problem. On that reading, the swarm is mainly an efficiency story. It is a way of turning one constrained process into many simultaneous ones.

There is truth in that. It explains why swarm architectures are attractive in the first place. But it is still too shallow. Throughput changes how much a system can do. Distributed adjudication changes what the system is.

The deeper issue begins once agents are no longer merely acting in parallel, but evaluating in parallel. Once one node’s output becomes another node’s input, and one local check becomes another local constraint, evaluation stops looking like something imposed from above at the end. It starts appearing as something that circulates through the system as it moves.

The novelty of the swarm is not that it thinks in parallel. It is that it judges in parallel.

Seen from that angle, the old picture of verification starts to look unusually narrow. In its ordinary form, verification is easy to recognize. A system generates candidate outputs. A separate layer ranks them, filters them, rejects weak ones, escalates uncertain ones, and decides which path proceeds. The evaluator may be human, model-based, or hybrid, but the structure is still sequential. Production happens here; checking happens there.

Swarm architectures weaken that separation. As soon as agents begin correcting, constraining, or redirecting one another in the course of operation, verification ceases to be only a later audit. It becomes part of the process that generates the next state of the system. One local judgment does not merely assess an outcome. It helps determine what the next round of action even is.

A branch that fails some local relevance threshold does not merely get scored after the fact; it drops out of continuation. A path that survives a local confidence check does not merely receive approval; it acquires momentum. An uncertain output that is escalated does not simply get noticed; it reorders attention inside the system. In each case, judgment is no longer standing outside production as its examiner. It is shaping the traffic of the system from within.
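The difference between scoring after the fact and judging in flight can be made concrete with a toy sketch. Everything here is an assumption for illustration: `expand`, `score`, and the threshold value are hypothetical stand-ins, not a description of any real swarm system. The same check is applied in both runs; what differs is whether it only reports on finished branches or decides which branches get to continue.

```python
def expand(branch: str) -> list[str]:
    """Hypothetical generator: each branch proposes two continuations."""
    return [branch + "a", branch + "b"]


def score(branch: str) -> float:
    """Hypothetical local check: the fraction of 'a' steps in the branch."""
    return branch.count("a") / len(branch)


def run_post_hoc(rounds: int) -> list[tuple[str, float]]:
    """Generate everything first, then score the finished branches."""
    frontier = ["a"]
    for _ in range(rounds):
        frontier = [child for b in frontier for child in expand(b)]
    return [(b, score(b)) for b in frontier]


def run_in_loop(rounds: int, threshold: float) -> list[str]:
    """Apply the same check inside each round; failures never continue."""
    frontier = ["a"]
    for _ in range(rounds):
        children = [child for b in frontier for child in expand(b)]
        frontier = [c for c in children if score(c) >= threshold]
    return frontier


# Same check, same threshold, applied at different points in the process.
passed_afterwards = [b for b, s in run_post_hoc(2) if s >= 0.6]
survived_in_flight = run_in_loop(2, threshold=0.6)
print(passed_afterwards)   # ['aaa', 'aab', 'aba']
print(survived_in_flight)  # ['aaa', 'aab']
```

In this toy run the branch `aba` would have passed the check had it been examined at the end, but it never comes into existence in the in-loop run because its parent was dropped a round earlier. The check did not merely grade an outcome; it changed which outcomes were produced.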

Once evaluation is distributed through the same medium as production, the distinction between system and supervisor begins to weaken. The point is not that a whole self gets copied across the swarm. What is being distributed here is something narrower and more consequential: a pattern of evaluative logic. What gets reproduced across the swarm are local criteria of continuation, correction, relevance, and escalation.

That distributed judgment does not emerge from nowhere. Before a swarm can circulate criteria of relevance, correction, escalation, or continuation, some initial structure has to be in place. A path must be marked as worth following. A signal must count as noise or not. A deviation must register as an error, a possibility, or an exception.

This is the part many descriptions of swarm intelligence skip over. Once people see many agents interacting, they are tempted to narrate what follows as if the system had generated its own standards from the ground up. But emergence is never that innocent. Before local judgments can begin reproducing themselves across the swarm, earlier acts of selection have already shaped the conditions under which those judgments will take form.

Those earlier acts can come from many places. Some are obvious: system prompts, task framing, available tools, intervention rules, escalation pathways, reward structures, and definitions of success or failure. Others are less visible because they are inherited from the models themselves: priors built into training, habitual response styles, latent assumptions about relevance, and the kinds of structure the model is already inclined to impose on a problem. Not every system seeds these criteria in the same way, and not every seed carries equal weight. But no swarm begins from zero.

That matters because the seed is easiest to notice only at the beginning, when it still looks like setup. Once the system is in motion, the same criteria start appearing not as choices but as conditions. What was initially installed as a preference becomes harder to distinguish from the architecture itself. A relevance threshold starts to feel like the natural shape of the problem. A routing rule starts to look like neutral process. A style of correction begins to pass as simple system behavior.

The seed matters precisely because it later becomes invisible. The more often a criterion is repeated across local decisions, the less it looks like something chosen and the more it looks like something given. A swarm may appear to be developing its own logic even while it is still reproducing distinctions that were planted earlier: what matters, what counts, what gets ignored, what deserves escalation, what can be silently resolved.

That does not make emergence unreal. Real systems do generate patterns not reducible to any single initial instruction. But emergence does not erase inheritance. It operates through it. The point is not that every outcome is secretly predetermined by the seed. The point is that later autonomy is always conditioned.

And once those seeded criteria are no longer merely consulted but repeatedly enacted across many local decisions, they stop functioning as initial preferences and begin functioning as distributed governance. A criterion becomes a form of authority when it is not just chosen once, but recursively applied as a condition of correction, relevance, and continuation across the swarm.

Once that happens, authority no longer needs to appear as visible command. It can persist as a repeated pattern of selection and constraint distributed across the system.

Take something as simple as a relevance threshold. Imagine a swarm in which branches of inquiry, candidate outputs, or proposed actions are allowed to continue only if they clear some local bar of confidence, fit, or usefulness. No sovereign needs to step in each time and declare what deserves to live. The threshold already does that work. It governs by deciding what survives long enough to become part of the system’s future.

Or take an escalation rule. Suppose uncertain, high-risk, or ambiguous cases are routed upward for more scrutiny, while routine cases are resolved locally and disappear back into the flow. That rule is not a neutral convenience. It already organizes the hierarchy of attention. It decides what becomes visible enough to matter and what remains too minor, too ordinary, or too low-confidence to rise. In doing so, it allocates authority.

These are modest examples, but that is the point. Governance in such systems often appears not as command, but as repeated conditions of continuation. It lives in thresholds, routing rules, tolerances, and correction styles. It is reproduced through repeated local decisions that shape what the system can become next.
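The escalation rule described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `Case` fields, the `route` function, the example cases, and the `min_confidence` default are all hypothetical, chosen only to show how a routing rule decides what becomes visible.

```python
from dataclasses import dataclass


@dataclass
class Case:
    name: str
    confidence: float  # assumed to lie in [0, 1]
    high_risk: bool


def route(case: Case, min_confidence: float = 0.8) -> str:
    """Hypothetical escalation rule: uncertain or high-risk cases go up."""
    if case.high_risk or case.confidence < min_confidence:
        return "escalate"
    return "resolve_locally"


cases = [
    Case("routine refund", 0.95, False),
    Case("ambiguous contract clause", 0.55, False),
    Case("large transfer", 0.97, True),
]

# Only escalated cases ever reach a reviewer's attention.
visible = [c.name for c in cases if route(c) == "escalate"]
print(visible)  # ['ambiguous contract clause', 'large transfer']
```

Nothing in the rule announces itself as a decision about authority, yet lowering `min_confidence` to 0.5 would quietly resolve the ambiguous clause locally and remove it from view. The parameter allocates attention whether or not anyone remembers choosing it.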

Seen this way, authority becomes harder to locate precisely because it has become more pervasive. It is no longer contained in a visibly commanding figure or in a clean supervisory layer standing outside the process. It is embedded in the criteria by which the system filters noise, recognizes significance, propagates confidence, and manages uncertainty. What governs the swarm is often not a final order, but a distributed pattern of admissibility. Governance can operate through technical criteria without needing visible sovereign command.

This is also why the earlier seed matters so much. The authority now operating across the swarm did not materialize spontaneously at the moment of coordination. It arrived by way of earlier decisions about what would count as signal, what would trigger escalation, what degree of deviation would be tolerated, and what kind of correction would be treated as enough. Once those decisions become ambient, they stop looking like authorship and start looking like process.

Public experiments like the Roemmele case are interesting in this light not because they prove the full internal logic of swarm governance, but because they make this migration easier to notice. A system may still be rhetorically attached to a central figure (a “CEO,” a named orchestrator, a human builder) while much of its practical authority is already being redistributed into local evaluative patterns. The visible center remains as a symbol, a narrative anchor, or an intervention point. But the day-to-day governance of the system increasingly resides elsewhere: in the repeated criteria by which branches are filtered, tasks are routed, uncertainty is escalated, and outputs are allowed to count.

Authority has not disappeared. It has become infrastructural.

At this point the familiar critique of “bias amplification” starts to feel insufficient. The phrase is not wrong, but it is too blunt for what is happening here. It suggests that the problem is mainly one of distortion: a system inherits some prior tendency and then enlarges it. That occurs. But the deeper issue in swarm architectures is not simply that inherited tendencies get amplified. It is that authorship becomes harder to see at all.

Once judgment is distributed across many local decisions, the system begins to present its own behavior as if it were arising from nowhere in particular. No single moment appears decisive. No single intervention seems fully responsible. Outcomes emerge from branching paths, recursive corrections, filtered continuations, and escalated exceptions. The system starts to look self-organizing not only because it is complex, but because the originating criteria are no longer easy to point to.

That is the harder thing to see. A distributed architecture can make inherited judgment look like spontaneous order. What began as earlier choices about relevance, sufficiency, escalation, tolerable deviation, or preferred correction styles returns later not as visible authorship, but as ambient system behavior. By the time these patterns are operating across thousands or millions of local interactions, they no longer feel like decisions. They feel like the natural shape of the system.

This matters because disappearance is not the same as absence. Authorship does not have to remain visible in order to remain operative. A threshold still governs even when no one is announcing it. A routing rule still allocates attention even when it has become routine. A correction style still shapes outcomes even when it no longer appears as anyone’s deliberate intervention. In that sense, the system can become more authored at the same moment it appears less obviously controlled.

That is one reason the language of “autonomy” can mislead here. The question is not whether the swarm is genuinely generating novel behavior. It often is. The question is how that novelty is conditioned, filtered, and made legible by criteria whose origin becomes progressively harder to trace. Emergence is real, but it is not pure. The more system behavior looks self-organizing, the easier it becomes to forget that self-organization is taking place inside an already structured field of admissibility.

This is where responsibility also begins to blur. When authorship is concentrated, responsibility is at least conceptually easier to locate. But once shaping power is diffused into local rules, repeated thresholds, and recursive evaluative loops, responsibility becomes harder to assign not because no one shaped the system, but because shaping has become distributed across the system’s ordinary operation. That matters beyond interpretation. If evaluative judgment is distributed across local thresholds, routing rules, and recursive corrections, then auditing becomes harder, responsibility becomes easier to evade, and failure can be misdescribed as emergence rather than recognized as the effect of seeded criteria operating at scale.

That does not mean responsibility disappears. It means it becomes structurally obscured. The system can now behave in ways that feel less like the execution of a directive and more like the unfolding of an environment. Environments are much harder to blame than decision-makers, even when they have been built, tuned, and maintained by decisions all along.
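To see what tracing seeded criteria would involve, consider a sketch in which every local decision records which criterion made it and who installed that criterion. This is an assumption-laden illustration, not a claim about how any existing system works: `Criterion`, `AuditLog`, the `seeded_by` field, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float
    seeded_by: str  # hypothetical provenance field: who installed this rule


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, criterion: Criterion, item: str, value: float) -> bool:
        """Apply the criterion and log the decision with its provenance."""
        passed = value >= criterion.threshold
        self.entries.append({
            "item": item,
            "criterion": criterion.name,
            "seeded_by": criterion.seeded_by,
            "passed": passed,
        })
        return passed

    def rejections_by_seeder(self) -> dict[str, int]:
        """Count rejected items per origin of the rejecting criterion."""
        counts: dict[str, int] = {}
        for entry in self.entries:
            if not entry["passed"]:
                seeder = entry["seeded_by"]
                counts[seeder] = counts.get(seeder, 0) + 1
        return counts


relevance = Criterion("relevance", 0.7, seeded_by="system prompt v1")
confidence = Criterion("confidence", 0.5, seeded_by="operator config")

log = AuditLog()
log.record(relevance, "branch-1", 0.9)
log.record(relevance, "branch-2", 0.4)
log.record(confidence, "branch-3", 0.2)
log.record(relevance, "branch-4", 0.1)
print(log.rejections_by_seeder())
# {'system prompt v1': 2, 'operator config': 1}
```

The sketch only works because provenance is attached at the moment each criterion is installed. Where criteria come from model priors or accumulate through recursive correction, there may be no such field to fill in, which is the obscurity the argument describes.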

The more judgment is distributed, the easier it becomes for authorship to disappear into system behavior.

At this point the familiar phrase “the human in the loop” stops clarifying much. It belongs to a picture in which the human can still be located as a discrete supervisory presence: the one who checks, approves, rejects, or intervenes from outside the process. But once judgment is distributed across recursive loops, local thresholds, and embedded evaluative patterns, that picture becomes harder to sustain. The question is no longer whether the human remains in the loop. The question is in what form.

The human does not disappear; the role changes.

The human persists less as a visibly sovereign overseer and more as a structural presence diffused through the system: initiator, selector, threshold-setter, exception-handler, residue-carrier. Each of those roles names a different way in which human judgment can remain operative without remaining central. Someone sets the problem in motion. Someone defines what the system is for. Someone establishes what counts as acceptable performance, what demands escalation, what tools are available, what kinds of deviation can be tolerated, and what kinds of failure matter enough to interrupt the flow. Even when later operation appears autonomous, those earlier acts do not vanish. They persist as conditions.

That is why it is not enough to say that the human has merely been “kept in” or “taken out.” Both formulations are too crude for what these architectures are actually doing. The human is not simply present or absent. The human is being redistributed. Not as a whole self, and not as some metaphysical essence, but as a pattern of initiating, filtering, and exception-managing functions that continue shaping the system long after overt supervision has receded from view.

This makes the human role both weaker and stranger. Weaker, because direct command is no longer the primary mode by which judgment enters the system. Stranger, because influence can now persist in ways that are less visible, less episodic, and harder to isolate. The human may no longer appear as the one who actively decides each next move. But the human remains implicated in the criteria by which moves are selected, rejected, escalated, or allowed to count as complete. What changes is not whether human judgment matters, but the form in which it matters.

This is one reason discussions of autonomy become confused so quickly. People often ask whether the system is “really acting on its own,” as though the alternatives were obvious dependence or obvious independence. But the more revealing question is how prior human judgment has been sedimented into the loop itself. A system can be autonomous in one sense while still being saturated with inherited human criteria in another. It can generate novel behavior while remaining conditioned by earlier choices that no longer announce themselves as choices. Once the boundary between outside supervision and internal process breaks down, autonomy becomes less a clean state than a layered relation.

The result is not a post-human system in any simple sense. It is a system in which the human becomes harder to see precisely because human judgment has moved from overt supervision into embedded conditions of operation. That is the harder condition to think clearly about, because it means the human may remain deeply operative even when the interface of the system presents itself as increasingly impersonal, procedural, or self-organizing.

What swarm architectures destabilize, then, is not only control but legibility. The human remains present, but in altered structural form: less as commander, more as selector of selectors; less as visible judge, more as author of the conditions under which judgment will circulate. The issue is not whether there is still a human somewhere in the loop. The issue is that the loop has become the medium through which human judgment survives in distributed form.

The familiar image of oversight has become too simple for the systems now beginning to take shape. What matters in swarm architectures is not only that action is distributed, but that evaluation is distributed. Verification no longer stands cleanly outside the process as a separate supervisory act. It begins to circulate through the same loops by which the system produces its next state.

The real question is how human judgment persists once the loop itself becomes the medium of judgment. The human does not disappear. The human becomes harder to isolate, present less as visible supervisor and more as initiator, selector, threshold-setter, and residue-carrier.

What looks post-human may turn out to be judgment redistributed until it no longer looks like judgment at all.

A Few Clarifications

A few distinctions may help keep the argument from being pulled back into the older vocabulary it is trying to move past.

This is not an argument that swarm systems are fully autonomous. If anything, the argument cuts against that simplification. The point is that swarm systems can appear increasingly autonomous while still carrying seeded criteria, inherited assumptions, and distributed forms of human judgment inside their operation.

It is also not an argument that the human disappears. The essay argues something narrower and, in some ways, stranger: the human becomes harder to locate. Instead of appearing mainly as a visible supervisor outside the loop, the human may persist as initiator, selector, threshold-setter, exception-handler, and residue-carrier inside the loop’s conditions of operation.

Nor is the claim simply that “bias gets amplified.” That happens, but the phrase is too blunt for what is at issue here. The deeper problem is that authorship becomes harder to see. Criteria that began as seeded choices can later appear as ambient system behavior, making inherited judgment look like spontaneous order.

The Roemmele case should be read in that spirit. It is not offered as exhaustive proof of how any one swarm system works internally. It is useful because it makes a broader tendency easier to see: a system framed as increasingly autonomous that still bears visible traces of initiation, intervention, and evaluative setup.

What is new here is not distributed authority in general. Institutions have long embedded judgment into procedures, rules, and standards. What is newer in model-mediated swarm systems is that evaluative criteria are increasingly regenerated inside the same loops that produce outcomes. Outputs become inputs. Local judgments become constraints. Verification circulates inside the process itself.

That matters because it changes what becomes difficult. Once judgment is distributed across thresholds, routing rules, escalation pathways, and recursive corrections, several things grow harder at once: auditing, locating responsibility, distinguishing emergence from seeded structure, and identifying where authority actually resides.

The simplest question to carry away from the essay is also the most useful one: Where, exactly, are the criteria of continuation, escalation, and admissibility located in this system, and who seeded them?