The Physicalization of Intelligence

Elon Musk’s terawatt-scale fab announcement is easy to read as a familiar escalation: more chips, more power, more compute. But that framing is already too shallow. Recent reporting around Musk’s Terafab plans and Tesla’s chip-production timeline gives the announcement a sharper edge than ordinary scale theater. The more important development is not simply that another powerful actor wants more compute. It is that AI is becoming harder to understand in purely algorithmic terms.

AI did not suddenly become physical. It always was. What is changing is that the physical layer is no longer receding into the background of the story. It is becoming one of the main variables that explains who can scale, deploy, and remain ahead. The question is not whether these constraints matter. The harder question is when they stop looking like friction and start determining the shape of the field itself.

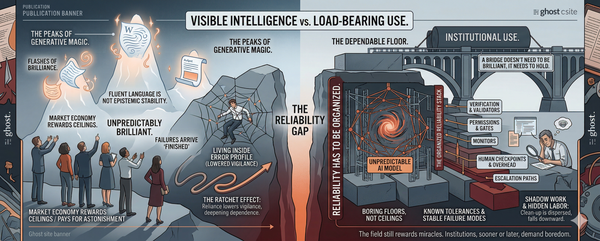

For years, the public language of the field has centered on models, parameters, benchmarks, architectures, and training runs. Those things still matter. But they no longer exhaust what matters. The model remains the visible object. Increasingly, it is not the whole object.

What now matters, and matters more openly than before, is the system surrounding the model: power procurement, fabrication capacity, packaging throughput, cooling, land, interconnect, and the long construction timelines required to turn abstract demand into available infrastructure. Interconnection processes alone have become long enough to shape what can be built when. Data-center water and cooling demands are also becoming more salient in siting and local planning. In that sense, the language of “more compute” has become slightly misleading. It sounds like an input. In practice it names a buildout.

By physicalization, I mean something simple: the pace and shape of AI are being governed more visibly by power, land, cooling, fabrication, and grid access than by models alone.

For most of its public life, AI has been narrated through the model. The central questions concerned parameters, architectures, training runs, benchmark scores, and, more recently, inference tricks. That way of speaking was not wrong. It reflected where the most visible advances were taking place. Progress appeared most clearly in the artifact itself: a system that could write better, reason longer, generate more fluently, or generalize across a wider range of tasks.

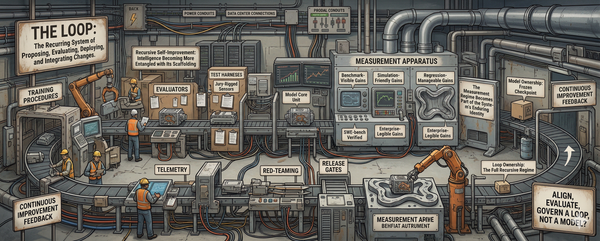

But that picture is becoming less complete. The model remains the most legible object in the system, the thing people can compare, rank, release, or debate. Yet it increasingly sits inside a much thicker operational environment that helps determine what capabilities can actually be trained, deployed, sustained, and improved. The relevant unit is no longer just the model in abstraction, but the industrial system that makes the model possible at scale.

That system includes the obvious elements: chips, power, cooling, networking, and fabrication capacity. It also includes less glamorous but equally decisive constraints: land, substations, transformer lead times, grid interconnection approvals, packaging throughput, construction sequencing, water availability, and the political permission to build at speed. This is the work of procurement, delay, utility politics, and heat.

Discussion still often proceeds as though a model were the main thing and infrastructure its support layer. Increasingly, the relationship runs both ways. The surrounding system does not merely support intelligence. It conditions it. It shapes how fast a model can be trained, how widely it can be deployed, how cheaply it can be served, how often it can be updated, and how durable its advantages become once competitors try to catch up.

At smaller scales, many of these constraints can be treated as background friction. At larger scales, they become explanatory. A delay in packaging is no longer a supply-chain inconvenience. It is a cap on usable compute. A grid bottleneck is no longer an engineering detail. It is a brake on training, serving, and expansion. A cooling limit is no longer just an operations problem. It becomes part of the strategic landscape. At that point, the governing question is no longer simply what a system can do in principle. It is what the surrounding industrial base can sustain in practice.

What matters is not merely that these constraints exist, but that they slow some actors more than others. Once that happens, infrastructure ceases to be a universal condition and becomes a differentiator. Once the pace of intelligence is filtered through buildout, the question of who can think at scale begins to converge with the question of who can build at scale.

This does not mean that algorithmic progress has stopped mattering. A clever architecture still helps. A better optimizer still helps. More efficient inference still helps. Efficiency gains can delay, soften, or redistribute bottlenecks. They do not remove the need to pass through them. As the scale of ambition rises, those improvements no longer float above the substrate. They are filtered through it. The field still talks as though intelligence were mostly a property of models. Increasingly it is also a property of the environments that produce, power, cool, connect, and maintain them.

Announcements like Terafab make that shift harder to ignore. They do not merely signal appetite for larger systems. They reveal that the path to larger systems now runs through an industrial stack dense enough to shape the competitive order of the field itself.

The phrase “more compute” has become one of the field’s great abstractions. It sounds clean, almost mathematical, as though one were naming an input that could simply be increased by will, capital, or necessity. But at the scales now being imagined, compute is not a quantity in the abstract. It is a buildout. To ask for more of it is to ask for fabs, packaging, substations, cooling systems, fiber, land, permits, transformers, construction labor, network design, and time.

That matters because each of those elements obeys a different clock. Capital can move quickly. Permitting often cannot. Chips can be designed on one timeline, fabricated on another, and packaged on a third. A data-center shell can be erected before power is available to fill it. Steel can be in the ground while the actual capacity remains trapped in queue studies, transformer procurement, and utility timelines. Compute therefore does not scale as a single variable. It scales as a coordination problem across multiple industrial layers, each with its own bottlenecks, delays, and failure modes.
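The claim about clocks can be made concrete with a small sketch. It assumes, for simplicity, that every layer starts at the same moment and runs in parallel, so usable capacity arrives only when the slowest layer finishes. The layer names and month counts are hypothetical placeholders chosen for illustration, not reported figures.

```python
# Illustrative sketch only: every number below is a hypothetical placeholder,
# not a measured or reported lead time.
HYPOTHETICAL_LEAD_TIMES_MONTHS = {
    "capital_commitment": 3,
    "data_center_shell": 18,
    "chips_fabricated_and_packaged": 24,
    "transformer_procurement": 30,
    "grid_interconnection": 48,
}


def months_until_usable(lead_times):
    """Return (months, gating_layer), assuming every layer starts at month 0
    and runs in parallel, so capacity arrives only when the slowest is done."""
    gating_layer = max(lead_times, key=lead_times.get)
    return lead_times[gating_layer], gating_layer


def months_waiting(lead_times):
    """Months each finished layer sits idle waiting for the gating layer."""
    total, _ = months_until_usable(lead_times)
    return {layer: total - months for layer, months in lead_times.items()}


if __name__ == "__main__":
    total, gating = months_until_usable(HYPOTHETICAL_LEAD_TIMES_MONTHS)
    print(f"usable capacity after {total} months, gated by {gating}")
    for layer, idle in months_waiting(HYPOTHETICAL_LEAD_TIMES_MONTHS).items():
        print(f"{layer}: idle for {idle} months")
```

Under these assumed numbers, the shell stands finished long before the grid connection arrives. Capital moving faster changes nothing, because the slowest clock sets the date.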

This is one reason the rhetoric of escalation can mislead. A terawatt announcement sounds like an answer. In practice it is a declaration of dependence on an entire supporting order. The relevant question is not simply whether demand for compute exists. It plainly does. The harder question is whether the industrial stack beneath that demand can be assembled at the speed, density, and reliability the ambition requires. That stack is not reducible to GPUs or wafers alone. Advanced packaging, once easy to treat as a secondary manufacturing detail, is now a bottleneck in its own right. That is exactly the kind of intermediate dependency that turns “more compute” from a variable into a buildout.

The same point appears from another angle if one asks what actually delays large-scale deployment. It is rarely a shortage of desire. It is more often some intermediate dependency that fails to scale in step with the rest: transformer procurement, substation upgrades, fiber routing, water access, thermal constraints, specialized labor, zoning, or the long procedural tail of environmental and utility review. These are not incidental obstacles placed in the path of an otherwise autonomous technical process. They are part of the process itself. At sufficient scale, infrastructure is not merely the vessel into which compute is poured. It is the thing being built.

That has consequences for how one interprets advantage. “More compute” does not reward whoever wants it most. It rewards whoever can orchestrate the stack beneath it. That means actors with unusual access to power, capital, industrial partnerships, land, political clearance, and deployment capacity begin to enjoy a different kind of lead. Their advantage is no longer just that they can train a larger model. It is that they can turn abstract demand for intelligence into a functioning physical system more quickly and more repeatedly than others can. In that environment, the gap between having an idea and being able to instantiate it becomes strategically decisive.

Compute, then, should not be understood as a resource in the ordinary sense, like fuel sitting in reserve. It is closer to a throughput condition. What matters is not merely how many chips exist somewhere in principle, but how many can be fabricated, packaged, powered, cooled, networked, and kept running inside a coherent system. A vast nominal supply means little if some narrower stage in the chain becomes binding. In that sense, compute is less like a stockpile than a pipeline whose narrowest segment can determine the pace of the whole field.
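A minimal sketch of the pipeline picture, assuming the stages run strictly in series so that usable output is capped by the narrowest one. The stage names follow the paragraph above; the capacities are invented for illustration and describe no real supplier or site.

```python
# Illustrative sketch only: capacities are hypothetical accelerators per month,
# invented to show the bottleneck logic rather than to describe any real chain.
HYPOTHETICAL_STAGE_CAPACITY = {
    "fabricated": 120_000,
    "packaged": 70_000,
    "powered": 45_000,
    "cooled": 60_000,
    "networked": 80_000,
}


def usable_throughput(stage_capacity):
    """Return (throughput, binding_stage), assuming stages run in series so
    that the narrowest stage caps what the whole pipeline can deliver."""
    binding_stage = min(stage_capacity, key=stage_capacity.get)
    return stage_capacity[binding_stage], binding_stage


if __name__ == "__main__":
    print(usable_throughput(HYPOTHETICAL_STAGE_CAPACITY))

    # Doubling a non-binding stage leaves usable throughput unchanged.
    more_fab = dict(HYPOTHETICAL_STAGE_CAPACITY, fabricated=240_000)
    print(usable_throughput(more_fab))

    # Relieving the binding stage raises throughput only as far as the
    # next-narrowest stage, which then becomes the new constraint.
    more_power = dict(HYPOTHETICAL_STAGE_CAPACITY, powered=100_000)
    print(usable_throughput(more_power))
```

Two things follow from the sketch. Adding capacity anywhere except the binding stage buys nothing, and relieving the binding stage simply hands the constraint to the next-narrowest one.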

Once that is clear, the strategic picture changes. Advantage can no longer be measured only in model quality, research talent, or benchmark performance. Those things remain real. But they now operate inside a more grounded contest whose decisive questions concern execution: who can secure power, who can acquire or build sites, who can move through permitting quickly enough, who can obtain fabrication and packaging capacity, who can coordinate networking and cooling at scale, and who can sustain all of it long enough to turn temporary lead into durable position.

This changes the meaning of AI leadership. In the earlier phase of the field, leadership could still be imagined primarily as a function of scientific or technical advance. The leading actor was the one that discovered something important first, trained a larger model first, or found a way to extract more capability from the same general substrate. That picture has not disappeared, but it has thickened. Leadership now increasingly resembles an infrastructural achievement. It belongs not only to whoever produces a breakthrough, but to whoever can embed that breakthrough inside a system capable of supporting repeated expansion, rapid deployment, and reliable operation.

That is a subtler and often more resistant kind of advantage. A clever idea can narrow a gap in research, because research advantages can often be copied, adapted, or diffused. A gap in power access, buildout speed, supply-chain coordination, or deployment density is harder to close. Infrastructural advantages harden over time because each gain in capacity enables the next gain in capacity.

The cloud era did democratize access to compute in important ways. But the present buildout is different in kind. The binding constraints are increasingly not just rented access to hardware, but power, interconnection, packaging, siting, and the industrial coordination required to keep large systems running at scale. A company with easier access to sites, faster power procurement, stronger industrial partnerships, and denser deployment infrastructure does not merely possess more compute at a given moment. It acquires a better position from which to keep converting ambition into reality.

This is one reason the current phase of AI should not be described too casually as a race. A race suggests competitors running on a common track under shared conditions. That is not quite the situation here. The track itself is being built unevenly. Some actors can solve part of the infrastructure problem themselves. Others must absorb delay. Once intelligence becomes more dependent on large physical systems, those asymmetries begin to matter as much as the model itself.

The specific bottlenecks differ by region, but the broader pattern is the same: once intelligence depends on large-scale industrial systems, local infrastructure conditions start shaping strategic possibility.

The consequence is not simply concentration in the ordinary economic sense, though that is part of it. Industrial coordination becomes a source of leverage. The actor that can build and sustain larger systems can deploy more broadly, operate more densely, and stabilize more complex systems across a wider surface area. Physical capacity begins to shape what kinds of operational advantage can accumulate.

That point matters because it begins to reveal what all this buildout is for. If larger physical systems merely supported one-off training runs, the story would already be significant. What makes the present moment more consequential is that these systems are increasingly being built to support continual operation. This is not the profile of a sector treating power as a passive input. It is the profile of a sector reorganizing itself around continuous operation under real grid constraints.

Seen in that light, infrastructure is no longer just the means of AI progress. It is part of its governing logic. The firms best positioned to build, coordinate, and sustain industrial-scale compute are also the firms best positioned to define the tempo at which the field moves from one generation of systems to the next. Their lead is not only a lead in resources. It is a lead in the ability to turn resources into a persistent learning environment.

The same logic appears even in the field’s more speculative edge cases. It is tempting to read orbital data centers or off-planet compute as evidence that AI is escaping the ordinary limits of terrestrial infrastructure. In one sense, that is exactly what such visions promise: relief from grid congestion, land scarcity, cooling constraints, and political friction. But that is precisely why they matter here. They do not represent an escape from physicalization. They represent its extension.

This does not make orbital compute a near-term reality. It makes it a revealing symptom of how seriously terrestrial constraints are already being taken at the outer edge of planning.

The fantasy of escape is therefore also a confession that those bottlenecks already bind. Even the most futuristic versions of this story belong inside the same frame. They do not overturn the argument. They sharpen it.

This argument would be weaker if the main bottlenecks remained predominantly algorithmic, if capacity expansion were cheap and widely accessible, or if efficiency gains consistently dissolved the strategic force of infrastructure constraints. The evidence traced here, from interconnection timelines to packaging bottlenecks, increasingly points in the other direction.

Musk’s terawatt-scale fab announcement matters, then, not because it offers a dramatic new number for the field to admire or fear. It matters because it makes something newly visible. AI can no longer be understood adequately as a story of models in abstraction, still less as a contest defined only by parameters, benchmarks, or cleverness at the algorithmic margin.

“More compute” no longer names a clean increment. It names a buildout: fabs, packaging, substations, cooling, interconnection queues, construction timelines, supply chains, and the political permissions required to turn ambition into sustained operation. At sufficient scale, these constraints stop being background conditions and become explanatory forces. They help decide not only what can be built, but who can build it, who can scale it, and who can remain ahead once intelligence becomes industrial.

This is the deeper significance of the shift now underway. The systems surrounding intelligence are becoming visible enough, dense enough, and strategic enough to shape the competitive order of the field. Leadership begins to look less like isolated brilliance alone and more like the ability to align research, power, fabrication, cooling, networking, land, and deployment into a coherent industrial stack.

And once that becomes clear, a further question follows almost immediately. What, exactly, is all this infrastructure being built to sustain? If the answer were only larger one-off training runs, the story would already be consequential. But that is no longer the whole answer. Increasingly, this buildout is organized for something more continuous: systems designed not merely to train intelligence, but to keep refining it under constant operational pressure.

What that kind of continuous operation demands of the buildout is the question to take up next.